Nowadays, AI has become our go-to tool for everything from coding to creative writing. However, the quality of the “output” you get from your Gen AI model depends heavily on the quality of the “input” (the prompt) you give it.

Think of an LLM (Large Language Model) as a brilliant but incredibly literal intern. If you give vague instructions, you’ll get a vague result. Here are a few tips for improving your prompts, which in turn will improve your LLM’s output.

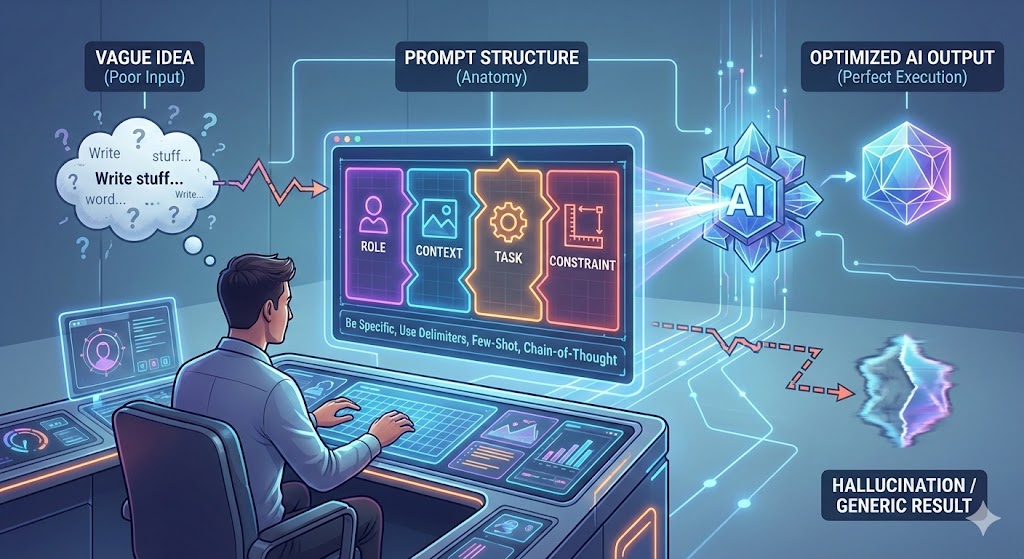

1. The Anatomy of a Perfect Prompt

A high-performing prompt isn’t just a sentence; it’s a structured set of instructions. To get the best results, try to include these four elements:

• Role: Tell the AI who it should be (e.g., “Act as a senior SEO strategist”).

• Context: Explain the background (e.g., “We are launching a new sustainable footwear brand”).

• Task: Define the specific action (e.g., “Write three meta descriptions”).

• Constraint: Set the boundaries (e.g., “Keep it under 155 characters and use a playful tone”).
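If you reuse prompts often, it can help to assemble these four elements programmatically so none gets forgotten. The sketch below is a minimal illustration: `build_prompt` is a hypothetical helper, and the "Label: text" layout is just one reasonable choice, not a format any particular model requires.

```python
def build_prompt(role: str, context: str, task: str, constraint: str) -> str:
    # Pair each element with a label; this layout is illustrative only.
    sections = [
        ("Role", role),
        ("Context", context),
        ("Task", task),
        ("Constraint", constraint),
    ]
    # Drop any element left blank so the prompt has no empty fields.
    return "\n".join(
        f"{label}: {text.strip()}" for label, text in sections if text.strip()
    )


prompt = build_prompt(
    role="Act as a senior SEO strategist.",
    context="We are launching a new sustainable footwear brand.",
    task="Write three meta descriptions.",
    constraint="Keep it under 155 characters and use a playful tone.",
)
print(prompt)
```

Because each element is a separate argument, a blank field stands out when you read the call, which is a cheap way to notice that a prompt is missing its context or constraints.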

2. Be Specific in Your Instructions

Specificity leaves the model less room for guesswork, which is where off-target answers and “AI hallucinations” tend to creep in. Instead of asking the AI to “write a blog post,” guide it through the structure you want.

Bad Prompt: “Write a post about healthy eating.”

Better Prompt: “Write a 500-word blog post about the benefits of a Mediterranean diet for office workers. Include a section on quick lunch prep and use a supportive, professional tone.”

3. Use Delimiters for Clarity

When your prompt includes large chunks of external text, such as an article you want summarized or data you need analyzed, use delimiters. Symbols like triple quotes ("""), XML tags (<text></text>), or triple dashes (---) help the AI distinguish between your instructions and the data it needs to process.

Example: “Summarize the text delimited by triple quotes into three concise bullet points: """[Your article content]"""”
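Here is a minimal sketch of the same idea in code, assuming you build the prompt as a plain string before sending it to a model. Both function names are hypothetical. The XML-tag variant is a useful fallback when the pasted text might itself contain triple quotes and blur the boundary.

```python
def summarize_prompt(article: str) -> str:
    # Instruction first, then the data fenced by triple quotes so the
    # model can tell where the instruction ends and the content begins.
    instruction = (
        "Summarize the text delimited by triple quotes "
        "into three concise bullet points."
    )
    return f'{instruction}\n"""\n{article.strip()}\n"""'


def summarize_prompt_xml(article: str) -> str:
    # Same idea using XML-style tags as the delimiter.
    instruction = (
        "Summarize the text inside the <text></text> tags "
        "into three concise bullet points."
    )
    return f"{instruction}\n<text>\n{article.strip()}\n</text>"


print(summarize_prompt("[Your article content]"))
print(summarize_prompt_xml("[Your article content]"))
```

Delimiters make the boundary clearer to the model; they do not guarantee it will never treat part of the pasted text as an instruction.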

4. Leverage “Few-Shot” Prompting

One of the most powerful prompt engineering techniques is “showing” rather than just “telling.” This is called Few-Shot Prompting.

If you need the AI to write in a very specific format or style, provide two or three examples of the desired output just before your actual request. The model will usually recognize the pattern and replicate it.
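A few-shot prompt is essentially examples laid out in a consistent pattern, followed by the real request in that same pattern with the answer left blank. The sketch below assumes a simple "Input/Output" layout; `few_shot_prompt` and the product-note examples are made up for illustration.

```python
def few_shot_prompt(instruction, examples, query):
    lines = [instruction, ""]
    # Each example shows the model one input and the exact output style we want.
    for number, (example_input, example_output) in enumerate(examples, start=1):
        lines += [
            f"Example {number}",
            f"Input: {example_input}",
            f"Output: {example_output}",
            "",
        ]
    # The real request repeats the pattern, leaving "Output:" for the model.
    lines += [f"Input: {query}", "Output:"]
    return "\n".join(lines)


examples = [
    ("our new shoes are made from recycled bottles", "Sneakers Made From Recycled Bottles"),
    ("the jacket folds into its own pocket", "The Jacket That Packs Into Itself"),
]
prompt = few_shot_prompt(
    "Rewrite each product note as a title-case headline of eight words or fewer.",
    examples,
    "the backpack has a built-in laptop sleeve",
)
print(prompt)
```

Ending on an open "Output:" line nudges the model to continue the pattern rather than comment on it.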

5. Utilize Chain-of-Thought (CoT)

For complex logic, coding problems, or math, ask the AI to “think step-by-step.” This technique prompts the model to write out its reasoning before arriving at a final answer. It often reduces logical errors and lets you see where the AI might have gone off track.
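One practical pattern is to ask for step-by-step reasoning *and* a clearly marked final line, so you can read the reasoning but still pull out the answer reliably. In this sketch, `cot_prompt`, `extract_answer`, and the "Answer:" convention are assumptions for illustration, and `sample_response` is hand-written, not real model output.

```python
import re
from typing import Optional


def cot_prompt(question: str) -> str:
    # Ask for visible reasoning plus a fixed-format final line.
    return (
        f"{question}\n\n"
        "Think step-by-step and show your reasoning. "
        "Then give the final result on its own last line in the form "
        "'Answer: <value>'."
    )


def extract_answer(response: str) -> Optional[str]:
    # Find every "Answer:" line and keep the last one, since that is the
    # line we asked the model to end with.
    matches = re.findall(r"^Answer:[ \t]*(.+)$", response, flags=re.MULTILINE)
    return matches[-1].strip() if matches else None


question = (
    "A shop packs 12 pens per box. It fills 4 boxes and finds 3 broken pens. "
    "How many usable pens are there?"
)
print(cot_prompt(question))

# Hand-written example of the kind of reply this prompt asks for.
sample_response = """Each box holds 12 pens and there are 4 boxes.
12 * 4 = 48 pens in total.
3 pens are broken, so 48 - 3 = 45.
Answer: 45"""
print(extract_answer(sample_response))  # 45
```

If `extract_answer` returns `None`, the model ignored the format, which is itself a signal to re-prompt rather than trust the reply.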

Quick Optimization Techniques

Here are three fast ways to improve your outputs instantly:

• Persona Adoption: Use this for creative or professional tasks. It forces the AI to adjust its tone, vocabulary, and perspective to fit a specific niche.

• Negative Constraints: Explicitly tell the AI what not to do (e.g., “Do not use corporate jargon like ‘synergy’” or “Do not include hashtags”).

• Output Formatting: Don’t settle for paragraphs if you need data. Explicitly request the answer in a specific format like Markdown, JSON, or a bulleted list.
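The three techniques combine naturally in a single prompt. The sketch below adds a persona, a list of “Do not” rules, and a request for JSON, then checks that the reply actually parses with the expected keys. The helper names, keys, and `sample_reply` are hypothetical; the reply is hand-written, not real model output.

```python
import json


def optimized_prompt(persona, task, avoid, json_keys):
    # Persona first, then the task, then one negative constraint per line.
    avoid_lines = "\n".join(f"- Do not {item}." for item in avoid)
    keys = ", ".join(f'"{key}"' for key in json_keys)
    return (
        f"You are {persona}.\n"
        f"{task}\n"
        f"{avoid_lines}\n"
        f"Respond only with a JSON object with the keys {keys}."
    )


def parse_json_reply(reply, json_keys):
    # json.loads raises json.JSONDecodeError (a ValueError) on invalid JSON.
    data = json.loads(reply)
    if not isinstance(data, dict):
        raise ValueError("Reply is not a JSON object")
    missing = [key for key in json_keys if key not in data]
    if missing:
        raise ValueError(f"Reply is missing keys: {missing}")
    return data


KEYS = ["headline", "body"]
prompt = optimized_prompt(
    persona="a senior copywriter for a sustainable footwear brand",
    task="Write a short product announcement.",
    avoid=["use corporate jargon like 'synergy'", "include hashtags"],
    json_keys=KEYS,
)
print(prompt)

sample_reply = '{"headline": "Step Lightly", "body": "Our new sneakers are made for everyday walks."}'
print(parse_json_reply(sample_reply, KEYS)["headline"])  # Step Lightly
```

Asking for JSON only pays off if you validate it; a parse failure is a cue to send the error back to the model and ask it to fix the format.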

The Secret Sauce: Iteration

Rarely is the very first prompt the best one. Prompt engineering is an iterative process. If the AI misses the mark, don’t just start a new chat; give it feedback as you would a human colleague. Say, “That was a good start, but the tone is too formal. Rewrite the second paragraph to be punchier and more casual.”
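The reason to stay in the same chat is that the model then sees your original request, its own draft, and your feedback together. The sketch below models that history as a list of role/content dictionaries; this shape is an assumption loosely based on the message format many chat APIs use, no real API is called, and `continue_with_feedback` is a hypothetical helper.

```python
def continue_with_feedback(history, model_reply, feedback):
    # Append the model's draft and your feedback, returning a new list so
    # the earlier history is left unchanged.
    return history + [
        {"role": "assistant", "content": model_reply},
        {"role": "user", "content": feedback},
    ]


history = [
    {"role": "user", "content": "Write a two-paragraph intro for our footwear brand's blog."}
]
first_draft = "[first draft from the model]"  # placeholder for the real reply
updated = continue_with_feedback(
    history,
    first_draft,
    "That was a good start, but the tone is too formal. "
    "Rewrite the second paragraph to be punchier and more casual.",
)
for message in updated:
    print(f"{message['role']}: {message['content']}")
```

Sending `updated` as the next request keeps all the context in one place, which a brand-new chat would throw away.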